Most discussions around generative AI focus on the “best” model, as if the creative process were a winner-take-all sprint. For content teams and creative operators building repeatable pipelines, this perspective is fundamentally flawed. In a production environment, quality is rarely the result of a single prompt; it is the result of a deliberate sequence of handoffs between specialized architectures.

Successful production isn’t about finding a magic-bullet tool. It is about model routing: the strategic decision-making process of determining which latent space should handle the structural foundation, which should manage the surgical refinement, and which should govern temporal motion. Treating AI as a modular factory rather than a vending machine is the only way to move past the “uncanny valley” of generic generated content.

The Friction of Model Fragmentation in Content Pipelines

The current generative landscape is defined by “tab-bloat.” An operator starts a concept in a high-fidelity image generator, downloads the file, uploads it to a separate upscaler, moves it to a video animator, and eventually realizes the lighting in the third step has broken the logic established in the first. Each transition represents a loss of data and momentum.

Standardizing on a single model to avoid this friction often leads to “average” output. A model that excels at hyper-realistic skin textures might fail miserably at rendering legible typography on a billboard. Conversely, a model optimized for complex spatial reasoning might produce flat, uninspiring lighting. The cognitive load of switching contexts between isolated tools kills production velocity, yet the cost of using a generalist model for specialist tasks is a visible drop in asset quality.

There is also a hidden cost in manual file transfers: the loss of metadata. When you move an asset between disconnected platforms, you lose the prompt history, the seed values, and the ability to go back one step to adjust a mask without restarting the entire chain. For teams iterating on ad creatives at scale, these friction points accumulate into hours of wasted rendering time.
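One way to picture what gets lost is a small history record that travels with the asset. The sketch below is only illustrative: `StepRecord`, `AssetHistory`, and every field name are hypothetical, not the schema of any particular platform. It shows how keeping the prompt, seed, and mask for each step makes "go back one step" a cheap operation instead of a restart.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass(frozen=True)
class StepRecord:
    """One stage in an asset's history (all field names are illustrative)."""
    stage: str                       # e.g. "generate", "inpaint", "upscale"
    model: str                       # model label as the operator names it
    prompt: str
    seed: Optional[int] = None       # kept so the step can be reproduced
    mask_path: Optional[str] = None  # kept so a mask can be adjusted later


@dataclass
class AssetHistory:
    """Append-only log that stays attached to the asset between tools."""
    steps: List[StepRecord] = field(default_factory=list)

    def record(self, step: StepRecord) -> None:
        self.steps.append(step)

    def rewind(self, n: int = 1) -> "AssetHistory":
        """Return a copy of the history with the last n steps dropped."""
        if n < 0 or n > len(self.steps):
            raise ValueError("cannot rewind past the start of the history")
        return AssetHistory(steps=self.steps[: len(self.steps) - n])


history = AssetHistory()
history.record(StepRecord("generate", "Flux", "billboard at dusk", seed=42))
history.record(StepRecord("inpaint", "image-editor", "fix face", mask_path="face_mask.png"))

# Step back to the state before the inpaint without losing the original seed.
before_inpaint = history.rewind(1)
```

When assets move between disconnected tools by manual download and upload, nothing like this record survives the trip, which is why a small mask adjustment can force a full regeneration.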

Phase One: Selecting the Base for Structural Integrity

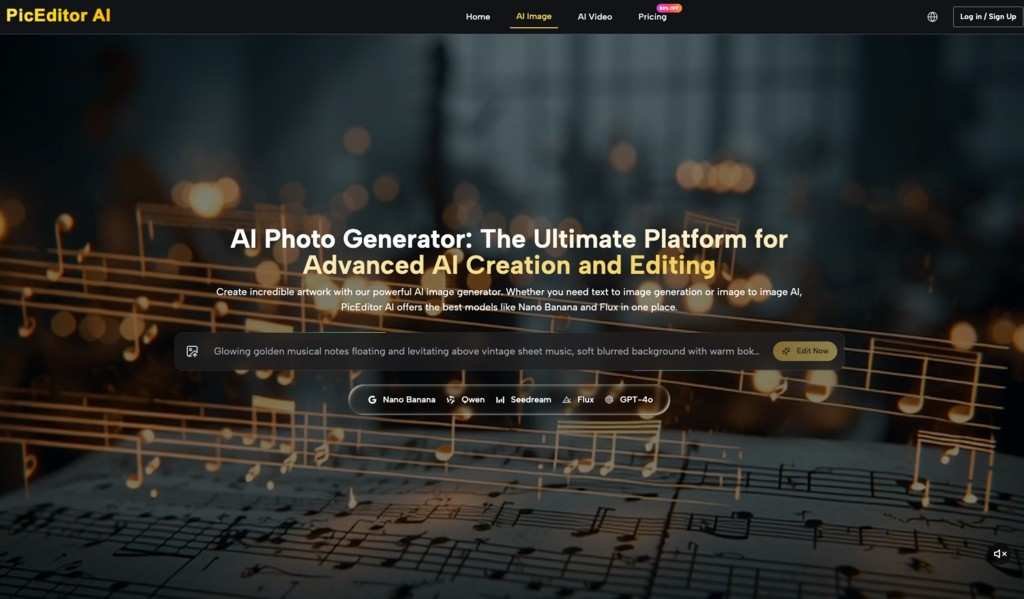

The first stage of any workflow is establishing the “base” image. The operator must decide which model prioritizes the specific constraints of the brief. If the project requires heavy branding or specific text elements, Flux is currently the industry standard for typographic accuracy. Its ability to follow complex, multi-subject prompts, which we call “semantic adherence,” ensures that the foundational layout doesn’t need to be fought in later stages.

If the goal is stylistic flexibility or a more “painterly” aesthetic, models like Nano Banana or Seedream are often more responsive to nuanced stylistic prompting. They offer a different kind of latent flexibility that handles textures and lighting moods with a less rigid adherence to photographic realism.

The choice here is about “structural integrity.” You are looking for the model that best captures the geometry and composition of the scene. It doesn’t need to be perfect; it needs to be correct in its proportions and depth mapping. Attempting to fix a fundamental perspective error during the animation or upscaling phase is nearly impossible.
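The base-model decision described above can be written down as a small routing rule. This is a minimal sketch, not a definitive policy: the `Brief` fields and the `candidate_base_models` function are hypothetical, the model names are plain labels rather than API identifiers, and giving text requirements priority over style is an assumption made for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Brief:
    """Constraints taken from the creative brief (illustrative fields)."""
    needs_legible_text: bool = False
    multi_subject: bool = False
    painterly_style: bool = False


def candidate_base_models(brief: Brief) -> List[str]:
    """Return the base models worth trying first for this brief."""
    # Assumption: typography and complex layouts outrank style,
    # because layout errors are the hardest to fix in later stages.
    if brief.needs_legible_text or brief.multi_subject:
        return ["Flux"]
    if brief.painterly_style:
        return ["Nano Banana", "Seedream"]
    # No strong constraint: leave every option open to the operator.
    return ["Flux", "Nano Banana", "Seedream"]


billboard = Brief(needs_legible_text=True, painterly_style=True)
mood_piece = Brief(painterly_style=True)
print(candidate_base_models(billboard))
print(candidate_base_models(mood_piece))
```

Writing the rule down, even this crudely, makes the team's routing choices reviewable instead of living in one operator's head.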

Phase Two: Refinement via Strategic Image Editing

Once the base is established, the workflow moves from generation to surgical modification. This is where most generic AI content fails: the creator accepts the first global output rather than refining local elements. A professional operator uses an AI Image Editor to handle object-level corrections that would be too risky to attempt through prompt-only iterations.

For example, if a model produces a perfect environment but a flawed character face, regenerating the entire image is a gamble. Instead, the operator uses a face-swap or inpainting feature to fix the specific area while keeping the surrounding lighting and geometry intact. This surgical approach preserves the “win” of the base generation while resolving the “fail” of the specific detail.

As the asset moves toward finalization, the role shifts toward technical polish. An AI Photo Editor acts as the critical bridge between a low-fidelity draft and a production-ready asset. This involves upscaling to remove diffusion artifacts, denoising shadows, and ensuring the color profile is consistent across a series of images. In this phase, the AI Photo Editor is less about creativity and more about technical compliance—ensuring the image meets the resolution and clarity standards required for high-definition displays or print.

Phase Three: The Handoff to Motion and Temporal Consistency

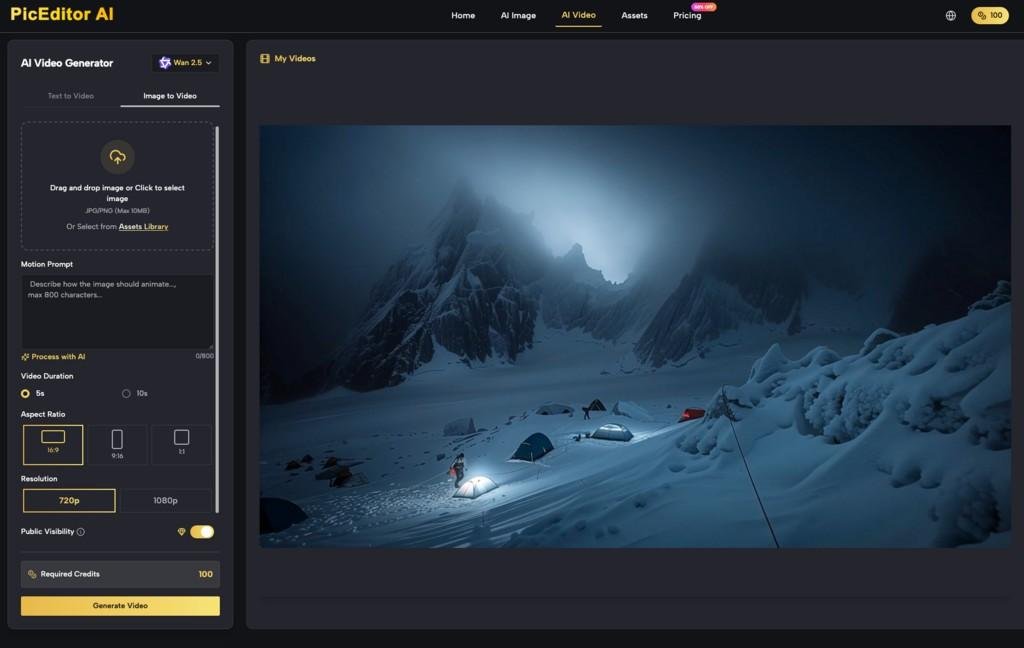

Transitioning from a static asset to video is the most volatile stage of the pipeline. The technical risk rises sharply because you are now managing temporal consistency: the ability of the AI to maintain a character’s features and environmental details across hundreds of frames.

The choice of motion model depends on the complexity of the desired physics. Kling has become a favorite for operators who need cinematic, heavy-weight motion that feels physically grounded. On the other hand, Google’s Veo or specialized models like Seedance might be chosen when the priority is smooth, fluid transitions or specific artistic movements that require more “gentle” inference.

The “First Frame Problem” is the primary hurdle here. A video model interprets the depth and texture of the static image to decide how it should move. If the static image contains ambiguous lighting or “flat” textures, the video model may struggle to infer the 3D space, resulting in warping or “morphing” artifacts. Operators must often go back to the AI Image Editor to add depth cues, such as more distinct shadow casting or sharper focal planes, to give the video model the data it needs to animate accurately.

Evaluating the Unified Workflow vs. The Best-of-Breed Stack

For a long time, the only way to achieve high-end results was to “daisy-chain” five or six different platforms. You would generate in one, upscale in another, remove backgrounds in a third, and animate in a fourth. While this allows for “best-of-breed” tool selection, it is an operational nightmare for teams.

Platforms like PicEditor AI are shifting the strategy toward a unified interface. By housing models like Flux, Kling, Seedance, and Nano Banana under one roof, the “routing” happens within the same environment. The efficiency gain isn’t just about avoiding tab-switching; it’s about the seamless passage of image data from the generation stage to the editing stage and finally to the animation stage.

When you can instantly pivot from a Flux-generated graphic to an AI Image Editor to remove an unwanted object, and then push that exact, cleaned-up asset directly into a Kling video render, the iterative loop shrinks from hours to minutes. This speed allows for more “versioning”: testing three different motion styles for the same base image to see which one maintains the best temporal stability.
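The versioning loop can be sketched as a function that renders one clip per motion style and keeps the most stable one. Everything here is an assumption made for illustration: `pick_most_stable` is a hypothetical helper, and `render` and `stability` are placeholders the caller supplies, standing in for whatever render call and quality measure a team actually uses.

```python
from typing import Callable, Dict, Sequence, Tuple


def pick_most_stable(
    base_image: str,
    motion_styles: Sequence[str],
    render: Callable[[str, str], str],
    stability: Callable[[str], float],
) -> Tuple[str, Dict[str, float]]:
    """Render one clip per style; return the best style and all scores.

    `render(base_image, style)` returns a clip identifier and
    `stability(clip)` returns a score where higher means steadier.
    """
    if not motion_styles:
        raise ValueError("at least one motion style is required")
    scores = {
        style: stability(render(base_image, style)) for style in motion_styles
    }
    best_style = max(scores, key=scores.get)
    return best_style, scores


# Stand-ins for a real render call and a real stability measure.
def fake_render(image: str, style: str) -> str:
    return f"{image}|{style}"


fake_scores = {
    "hero.png|slow_pan": 0.91,
    "hero.png|orbit": 0.74,
    "hero.png|handheld": 0.58,
}

best, all_scores = pick_most_stable(
    "hero.png", ["slow_pan", "orbit", "handheld"], fake_render, fake_scores.__getitem__
)
```

Because the base image is fixed across all three renders, any difference in the scores can be attributed to the motion style rather than to the source asset.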

The Practical Limits of the Current Model Ecosystem

Despite the rapid advancement of these tools, we must be realistic about the current technical ceilings. The “universal model” that can handle every stage of production with equal competence does not yet exist, and it is uncertain if it ever will.

One persistent limitation is character consistency across different model architectures. If you generate a character in one model and then use a different architecture for the video phase, the “latent understanding” of that character’s face often shifts. While tools like face-swapping can mitigate this, they are often post-process patches rather than integrated solutions. We cannot yet conclude that any automated pipeline can replace the need for a human operator to perform manual “cleanup” on frames where the physics have broken down.

Furthermore, physics-breaking artifacts, such as limbs disappearing or backgrounds liquefying, remain unpredictable in long-form AI video generation. High-velocity camera movements still frequently cause “temporal flickering,” where the lighting or texture of an object changes from frame to frame.
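A rough way to flag flicker for human review is to compare average brightness between consecutive frames. The sketch below is a deliberately crude proxy under stated assumptions: frames are plain nested lists of grayscale values, the function names are hypothetical, and the threshold is arbitrary. It catches global lighting jumps only; texture changes and localized artifacts would need a more careful measure.

```python
from typing import List

Frame = List[List[float]]  # grayscale pixel values, row by row


def mean_luminance(frame: Frame) -> float:
    """Average pixel value of one frame."""
    total = sum(sum(row) for row in frame)
    count = sum(len(row) for row in frame)
    return total / count


def flicker_scores(frames: List[Frame]) -> List[float]:
    """Absolute change in mean luminance between consecutive frames."""
    means = [mean_luminance(f) for f in frames]
    return [abs(b - a) for a, b in zip(means, means[1:])]


def flag_flicker(frames: List[Frame], threshold: float) -> List[int]:
    """Indices of frames whose brightness jumps past the threshold."""
    return [
        i + 1 for i, delta in enumerate(flicker_scores(frames)) if delta > threshold
    ]


clip = [
    [[10, 10], [10, 10]],  # frame 0
    [[10, 10], [10, 10]],  # frame 1: unchanged
    [[50, 50], [50, 50]],  # frame 2: sudden brightness jump
]
suspect_frames = flag_flicker(clip, threshold=5.0)  # threshold is an assumption
```

A check like this does not fix anything; it only narrows down which frames an operator should inspect first.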

A strategic operator accepts these limitations as part of the current landscape. They don’t fight the model to do what it can’t; they route the asset to the tool that handles that specific failure best. If the motion model fails to maintain a background, the operator might use a background removal tool in the AI Photo Editor phase to isolate the subject and composite it over a stable, static background in traditional editing software.
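This "route the failure, don't fight the model" habit can be captured as a simple lookup. The table below only restates remedies already described in this article; the failure labels and the `route_failure` function are hypothetical names chosen for illustration, and the default reflects the point that human cleanup remains the fallback.

```python
from typing import Dict

# Failure label -> remedy, restating the workarounds discussed above.
FALLBACK_ROUTES: Dict[str, str] = {
    "unstable_background": (
        "isolate the subject with background removal, then composite it "
        "over a static background in traditional editing software"
    ),
    "face_drift": "apply a face swap as a post-process patch",
    "warping": "return to the image editor and add depth cues to the first frame",
}

DEFAULT_ROUTE = "manual cleanup by a human operator"


def route_failure(failure: str) -> str:
    """Return the remedy for a known failure, or fall back to manual cleanup."""
    return FALLBACK_ROUTES.get(failure, DEFAULT_ROUTE)


print(route_failure("unstable_background"))
print(route_failure("limb_disappears"))  # not in the table: goes to a human
```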

Conclusion: The Operator’s Advantage

The competitive advantage in generative media no longer belongs to those who know the “best” prompt. It belongs to the operators who understand the topology of different models: knowing where Flux ends and Kling begins, or when a surgical edit is more efficient than a full re-generation.

By viewing the AI Image Editor and the various motion models as specialized nodes in a broader network, content teams can build pipelines that are resilient to the quirks of individual architectures. The goal is to minimize friction while maximizing the unique strengths of each model, using a unified platform to maintain the “connective tissue” between the concept and the final, polished delivery. In this ecosystem, the most valuable skill is not just creation, but the strategic routing of the creative intent through the right technical path.